Kevel Launches Kai to Boost Performance Optimisation, Relevance & Revenue for Retail Media Networks

Kevel, the API-first ad-serving company, is announcing its new branded AI feature set: Kai (Kevel Artificial Intelligence), a suite of AI and machine learning technologies that enable performance optimisation and drive relevancy, profitability, and revenue. Kai is available as part of the Retail Media Cloud™, the ultimate SaaS platform for building retail media networks with ad-serving that maximises share of advertiser budgets.

The new tools were developed and spearheaded by Kevel’s AI/ML research group, chaired by CTO Tim Ewald, senior director of research and W3C member Paul DeGrandis, principal data scientist Richard Carter, PhD, and Retail Media Cloud™ GM and Velocidi founder Paulo Cunha. The group has decades of combined experience in AI, which it has drawn on to develop this suite of AI features to power ad serving and audience segmentation for a premium retail media experience.

With Kai, Kevel introduces two new features, Forecast and Custom Relevancy, alongside its existing AI Audience and DecisionAPI products. Kevel Forecast predicts inventory and campaign performance for existing and future campaigns using machine learning simulations to generate insights on both current and future ad flights.

Paulo Cunha, Retail Media Cloud GM at Kevel, explains:

“Forecast is a first of its kind for retail media. Traditional forecasting tools look simply at historical data to predict future campaign performance, whereas Kevel Forecast uses machine learning algorithms to project future campaign performance when considering all contextual and user audience targeting and pacing parameters in conjunction with other running or future ads. This way, advertisers always know exactly what their future performance looks like and retailers can maximise their inventory yield.”
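
The idea Cunha describes, projecting delivery by replaying expected traffic against every competing campaign instead of extrapolating one campaign's history, can be illustrated with a toy simulation. The sketch below is not Kevel's implementation: the `Campaign` fields, the "lowest priority number wins" rule, and the sample traffic are all assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Campaign:
    name: str
    targets: set   # hypothetical: audience segments this campaign targets
    priority: int  # hypothetical: lower number wins the ad slot
    cap: int       # hypothetical: impression cap for the simulated period


def simulate_delivery(campaigns, requests):
    """Replay projected ad requests and count impressions per campaign."""
    delivered = {c.name: 0 for c in campaigns}
    for segment in requests:
        # A campaign competes only if it targets the segment and is under its cap.
        eligible = [
            c for c in campaigns
            if segment in c.targets and delivered[c.name] < c.cap
        ]
        if not eligible:
            continue  # no campaign can fill this request
        winner = min(eligible, key=lambda c: c.priority)
        delivered[winner.name] += 1
    return delivered


campaigns = [
    Campaign("snacks", {"grocery"}, priority=1, cap=3),
    Campaign("drinks", {"grocery", "sports"}, priority=2, cap=10),
]
# Assumed traffic projection: one entry per expected ad request.
projected = ["grocery"] * 5 + ["sports"] * 2 + ["pets"]
forecast = simulate_delivery(campaigns, projected)
print(forecast)
```

Because the two campaigns compete for the same "grocery" requests, the forecast for "drinks" depends on when "snacks" reaches its cap, which a forecast built from one campaign's history alone would miss.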

Kevel’s Custom Relevancy allows retailers to plug their own AI/ML algorithms into Kevel Ad Server for custom targeting geared towards the individual performance of each network. Functioning as a ‘BYOM’ (bring your own model) feature, Custom Relevancy helps retailers use their own advanced models to determine relevance as part of their ad stack in a safe and secure way.

Tim Ewald, CTO at Kevel, commented:

“Retailers know their customers better than anyone else, but struggle to influence their ad serving with the exceptional AI-driven optimisation they use for promoting a customised user experience.”

“That all changes with Custom Relevancy, which allows customers to plug their own ML models into our ad decision process to dynamically adjust relevancy and improve ad serving on a per-user basis.”
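
As a rough illustration of the bring-your-own-model idea, the sketch below ranks candidate ads by weighting each bid with a score from a retailer-supplied model. It is a minimal sketch under stated assumptions, not Kevel's API: `RelevanceModel`, `rank_ads`, the bid-times-relevance formula, and the lookup-table "model" are all hypothetical.

```python
from typing import Callable, Dict, List

# Hypothetical type: a retailer-supplied model that maps (user_id, ad_id)
# to a relevance score between 0 and 1.
RelevanceModel = Callable[[str, str], float]


def rank_ads(user_id: str, bids: Dict[str, float], model: RelevanceModel) -> List[str]:
    """Order candidate ads by bid weighted by the retailer model's score."""
    scored = {ad: bid * model(user_id, ad) for ad, bid in bids.items()}
    return sorted(scored, key=scored.get, reverse=True)


def retailer_model(user_id: str, ad_id: str) -> float:
    # Stand-in for a real ML model: a fixed lookup table with a neutral default.
    affinities = {("u1", "running-shoes"): 0.9, ("u1", "tv"): 0.2}
    return affinities.get((user_id, ad_id), 0.5)


bids = {"tv": 2.0, "running-shoes": 1.0, "blender": 1.2}
ranking = rank_ads("u1", bids, retailer_model)
print(ranking)
```

Here the highest bidder ("tv") drops to last place for this user because the retailer's model rates it as a poor match, which is the kind of per-user adjustment the quote describes.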

Kai encompasses not just new features like Forecast and Custom Relevancy, but existing features like ad decisioning and pacing. Kevel’s approach to pacing, delivery, and decisioning leans into historical data, events, previous behaviour, context of the experience, ads viewed, and relevance scoring, plus trends and predictions to drive ad performance.

Richard Carter, principal data scientist, stated:

“What excites me about Kai is that it’s a set of features that showcases how machine learning can be used to deliver more value to our customers. We’ve developed these systems from original research using proprietary data sets, harnessing our many years of experience in ad serving.”

“We’ve been working closely with retail customers to prove where the most value sits and it’s in decisioning, relevancy, and segmentation. Kai is just the start of many more innovative, unique features in our pipeline.”

Google Unveils New AI-Driven Creative and Ad Tools for Marketers

Google held its yearly Google Marketing Live event in New York, where it unveiled new creative tools and ad experiences powered by artificial intelligence (AI). Here are some things that marketers can anticipate seeing at Google soon.

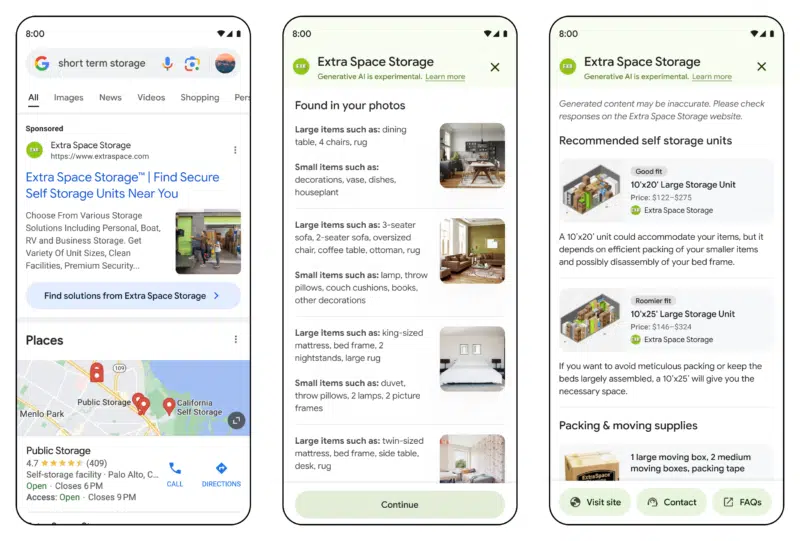

Search ad experience

One of the most creative updates to search is a new ad experience that is being tested and will roll out more broadly later this year. This interface assists users in making difficult purchasing decisions by providing them with supplementary data upon their voluntary consent. For example, the engine can suggest a storage option with the appropriate dimensions based on photos of the user’s belongings if the user is looking for a nearby storage facility.

The process starts with a search result that shows a clearly marked sponsored ad with a blue button. The button takes the user to another page where they can upload images or other content and receive recommendations.

Search Ads Experience. Image credit- MarTech

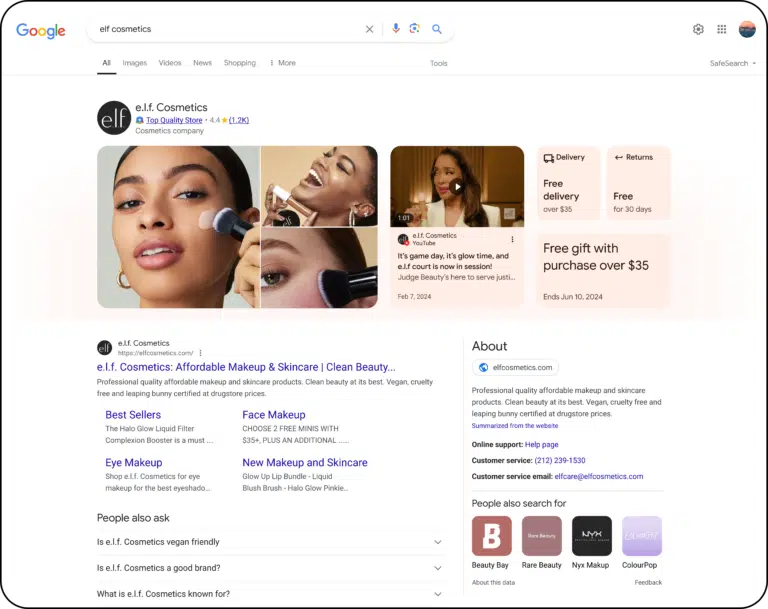

Brand profiles

New brand profiles are now searchable, according to Google. The data supplied by retailers in the Google Merchant Center is used to create the profiles. Google Maps’ Business Profiles and search results for users serve as inspiration for the profiles. The company claims that over 40% of search queries mentioning shopping mention a brand or retailer.

Brand Profiles. Image credit- MarTech

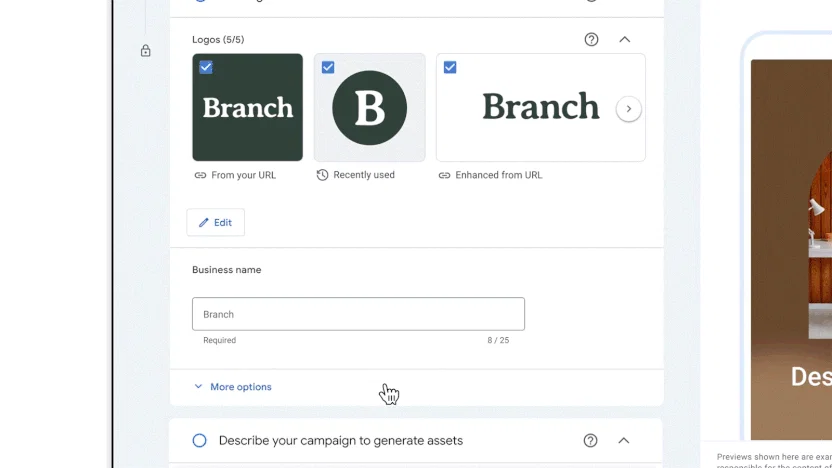

Creative Controls for Performance Max

Performance Max. Image credit- Afaqs

Advertisers will soon have the option to upload their brand guidelines—which include font, color schemes, and image reference points—to aid in the automatic creation of fresh, on-brand asset variations. In February, advanced Gemini AI models were released. You can use Performance Max campaigns on any Google ad inventory.

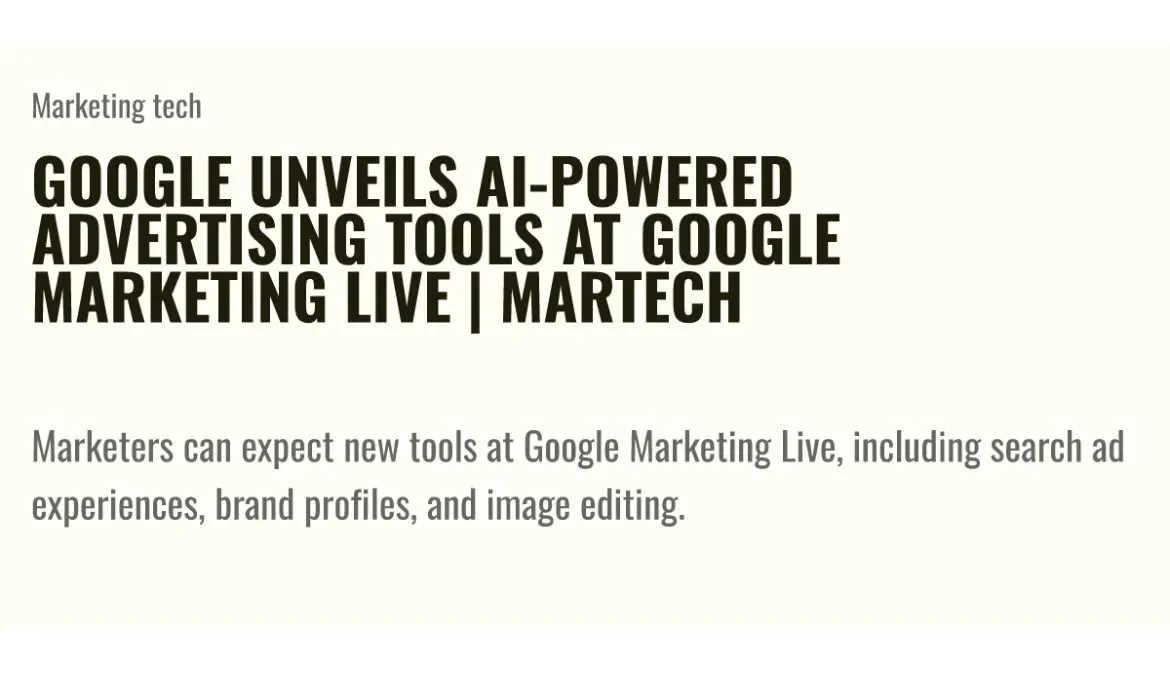

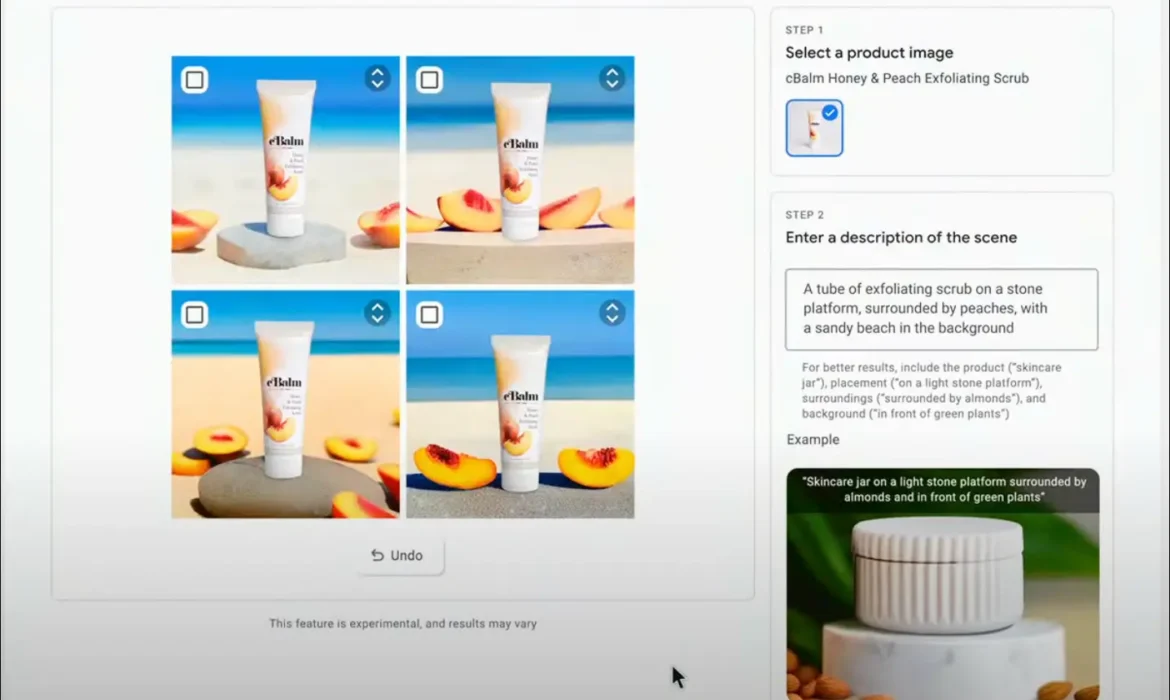

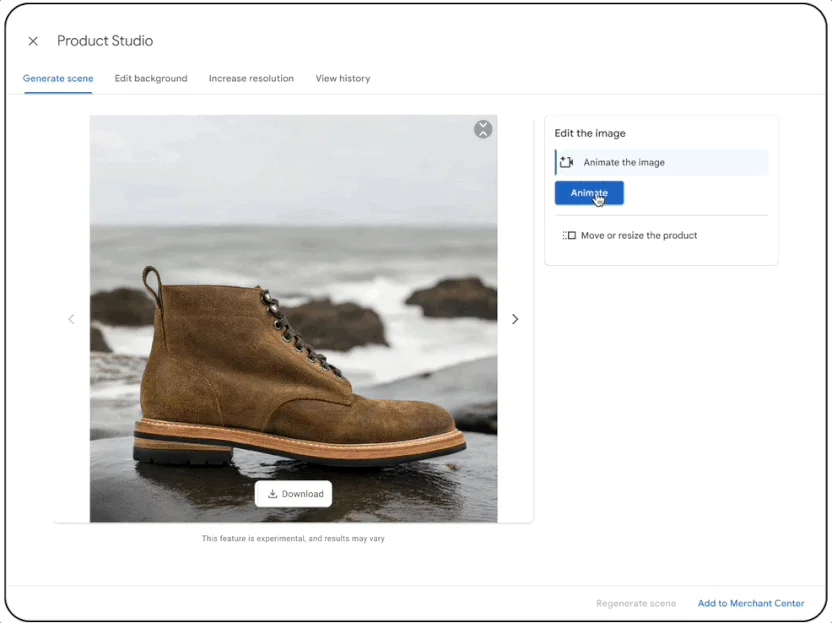

Product Studio

Google unveiled Product Studio in 2023 as a one-stop shop for merchants looking to create AI-powered content. Of the merchants who use it, 80% say Product Studio has made them more efficient or that they expect it to. New Product Studio capabilities continue to give merchants access to the power of Google AI. To be useful, generated images must blend in with a brand’s current campaigns and content, so brands can now create fresh product photos that reflect their distinct aesthetics.

Product Studio. Image credit- Afaqs

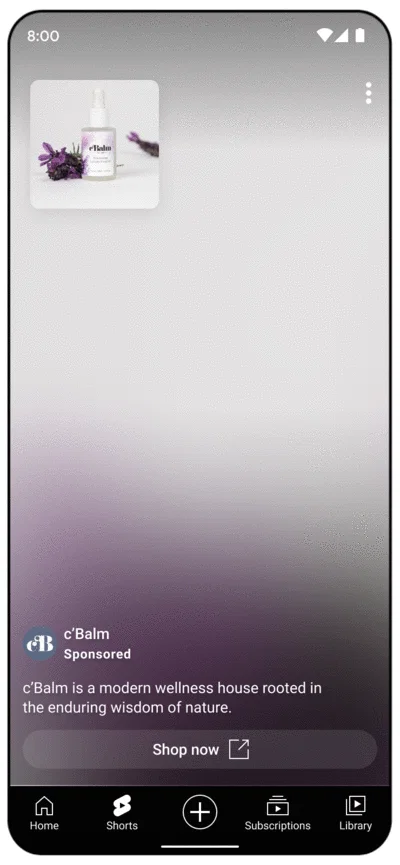

Immersive ad formats

Google also unveiled new ad formats that use its generative AI to make brand advertisements more effective. This year, a closed beta version will be made available to retailers so they can link their short-form product videos, or creator videos, to their advertisements. Shoppers on Search can interact with these brief videos that showcase how clothing would fit them, see helpful styling recommendations, and quickly explore brands’ related or complementary products with just a click. Additionally, Google will display AI summaries beneath the video highlights so that consumers can view important information about a product before deciding to purchase it.

Image editing

New image editing tools will be available to retailers advertising through Google Merchant Center. With the aid of Google AI, they will be able to experiment by adding new objects to advertisements and expanding backgrounds to fit all sizes and formats. These features are meant to help marketers enhance existing creative, for example by adding striking backgrounds or new products to it. The brand can use the new assets not only on Google channels but on any digital platform that supports images.

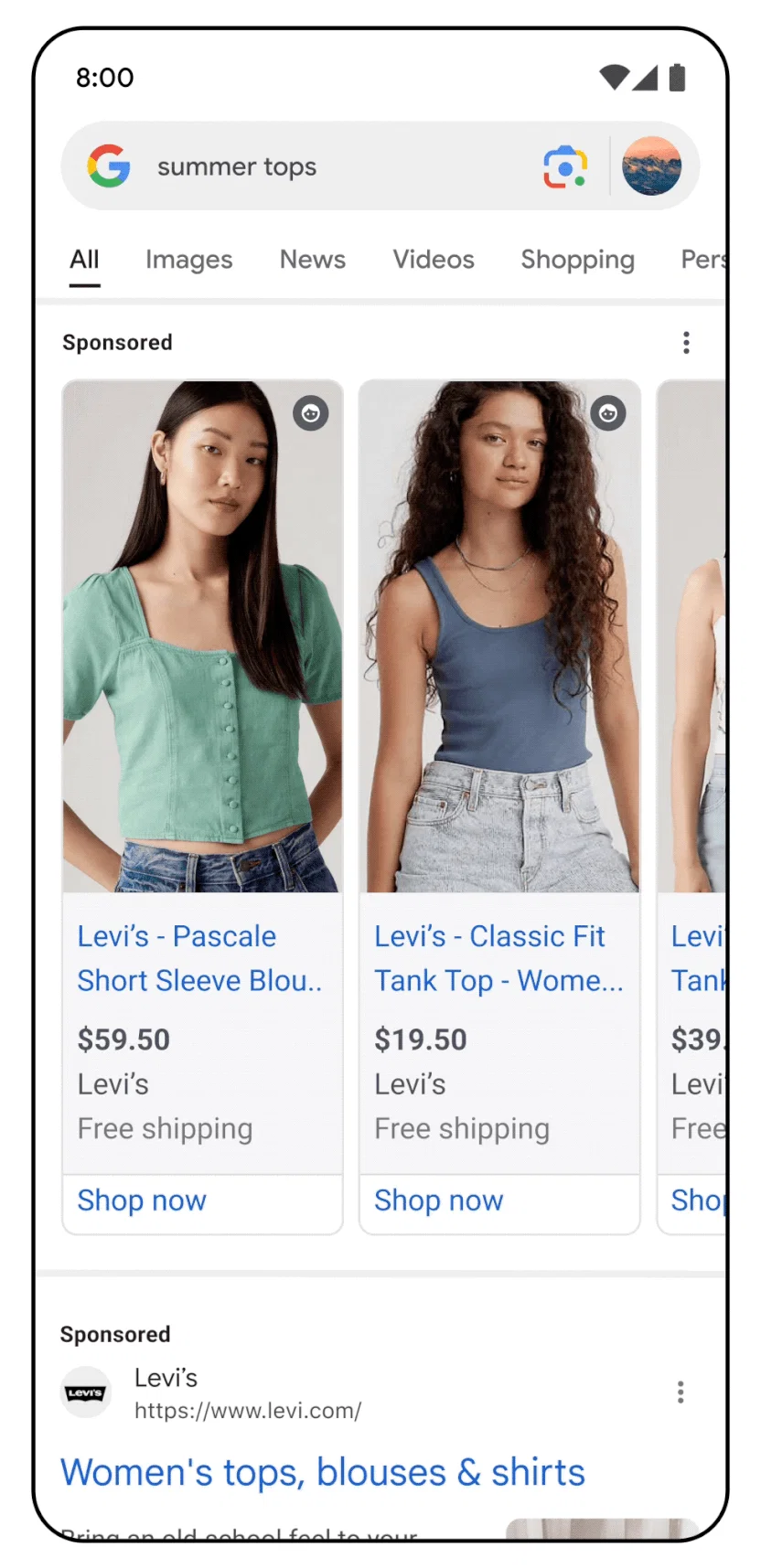

Shopping Ad experiences

Soon, shopping advertisements will offer brand-new immersive experiences. These include virtual try-ons and 3D spinning advertisements. For example, advertisements for shoes will allow the product to rotate 360 degrees. Virtual try-ons will let customers of all body shapes virtually try on tops like shirts and sweaters, which will benefit clothing brands. Later in the year, advertisers will also be able to incorporate product videos, summaries, and recommendations for related products within the ad format, making for a more user-driven experience. While some of these features were previously only available in organic search, they are now being added to advertisements.

Since introducing Virtual Try-On (VTO) technology to Search in 2023, Google has observed a higher number of users clicking through to retailer websites when viewing products with VTO enabled. Additionally, brands have observed that images with VTO receive 60% more high-quality views than images without VTO.

Shopping Ad Experience – VTOs. Image credit- Afaqs

Ads in AI Overview

Google declared that it would start testing shopping and search ads in AI Overview. This month, Gemini-powered AI overviews for searches were revealed. The experiences are now being made available in the United States and will shortly be available in other nations as well. Google is providing solutions for at least two of the main ways that generative AI promises to transform our digital lives with these new tools and features.

Demand Gen. Image credit- Afaqs

Why do these matter?

First, the gen AI answers in AI Overview will alter how users search. They will try to obtain the information they need by posing more in-depth queries and engaging in more back-and-forth dialogue. Relevant ads that are marked as “sponsored” will appear when appropriate.

Second, by producing fresh ad iterations that adhere to the brand playbook, gen AI will help creatives scale content. To make campaigns more effective, those assets will be displayed at the most consumer-friendly touchpoint. Gen AI will also simplify measurement, so that marketers can ask Google Analytics questions in natural language and get the answers they need to improve their campaigns.

Snapchat Launches New AR and ML Tools for Advertisers

Snapchat has launched new augmented reality (AR) and machine learning (ML) tools to help businesses and advertisers promote their brands on the platform more effectively. The company has been investing in automation and machine learning to speed up and simplify the creation of AR assets. The platform unveiled AR Extensions at the US-based event, IAB NewsFront. Advertisers can now directly incorporate AR lenses and filters into any of Snap’s ad formats, such as Collection Ads, Spotlights, Dynamic Product Ads, and Snap Ads.

Snapchat’s New AR and ML tool for brand growth

Another feature coming to Snapchat is ML Face Effects, which allows brands to use Generative AI technology to create customized Lenses for branded AR ads. With this new feature, brands can quickly create a unique machine-learning model and enhance a Lens with lifelike face effects. With the help of AR ads, advertisers will be able to directly promote their goods, intellectual property, and brands to Snapchat’s 422 million daily active users. New automation and machine learning tools have been released in the AI space to facilitate the quicker and simpler creation of augmented reality.

Snapchat hopes to help brands transform 2D product catalogs into try-on experiences by cutting down on the time required to create AR try-on assets at scale with this new tool. Additionally, brands can now use generative AI technology, which enables customer-produced lenses, to create branded AR ads on Snapchat. With the help of this new feature, users can quickly generate unique machine-learning models that produce realistic effects and branded selfie experiences for “Snapchatters.”

Snapchat’s ongoing affiliations for community building

Additionally, the social media network changed its relationship with Live Nation in an effort to better utilize Snapchat’s network of influencers, or “Snap Stars.” Through the Snap Nation Public Profile, Snap Nation will provide Snapchatters with exclusive behind-the-scenes content from well-known musicians. To compile shared experiences from the platform community in one location, Snapchat will also be curating stories from Live Nation concerts and festivals that feature public Spotlights from Snapchatters, Snap Stars, music fans, and artists.

Currently, advertisers can only take advantage of this feature in the United States. There, they can participate through branded content on creator stories, ads in Snap Nation stories, and AR Lenses that use Live Nation IP.

ProjectX Partners With mFilterIt To Boost Customer Experience

ProjectX, a brand-tech business owned by Abdul Latif Jameel that focuses on empowering brands to use technology to propel growth and improve outcomes, has announced a strategic partnership with mFilterIt, a provider of solutions for brand safety and ad fraud prevention. Through this partnership, ProjectX will take advantage of mFilterIt’s technological prowess to develop all-encompassing ad tech solutions that protect the digital environment for its brands. These include ad traffic validation, global e-commerce intelligence, Direct Carrier Billing (DCB), Value-Added Services (VAS), a brand protection suite, and anti-fraud solutions.

More innovation, and more openness in digital

Furthermore, ProjectX will aggressively market mFilterIt’s cutting-edge toolkit and services to MENA brands that it collaborates with. This partnership will promote more innovation, trust, and openness in the digital environment for a range of industries. By leveraging its patented AI/ML-based technology to foster customer trust and transparency, mFilterIt empowers brands across industry domains, Telcos, and VAS aggregators while focusing on safeguarding the digital integrity of enterprises across platforms. Over 600 clients in 75 countries are served by the organization and its parent group.

Here’s what they said

Ramzi Dargham, CEO of ProjectX, said:

“ProjectX combines the right methodology and technology needed to build and transform brands for future success. We provide tailored advisory services, including growth-focused strategies and tech stack recommendations based on a data-first approach. Through our collaboration with mFilterIt, we look forward to creating a transparent digital ecosystem for the MENA region’s clients.”

Amit Relan, CEO of mFilterIt, added:

“As we witness the ever-evolving landscape of the digital world, it becomes imperative to fortify our defenses against potential threats. Our agreed representation by ProjectX represents a significant step towards creating a more secure and transparent digital ecosystem. Together, we aim to empower businesses and protect their online assets, fostering a climate of trust and reliability.”

Jio Platforms Launches Jio Brain, an AI and ML Platform for Enterprises

Leading telecom and tech company Jio Platforms recently unveiled Jio Brain, an artificial intelligence (AI) and machine learning (ML) platform for businesses. Jio Brain seeks to simplify the deployment of ML tools across telecom networks, enterprise environments, and industry-specific IT setups in everyday operations, with an emphasis on integrating 5G capabilities. One of its main selling points is an enterprise-ready and mobile-ready large language model (LLM) as a service feature, which lets clients take advantage of generative AI. With over 500 data APIs and Representational State Transfer (REST) APIs, the cloud-native platform enables businesses to develop ML services tailored to their specific requirements.

Industry’s first 5G integrated ML platform

Aayush Bhatnagar, Senior Vice President of Jio, made the announcement. According to him, hundreds of engineers worked on the project for two years before Jio Brain was developed. The platform was described as “Industry’s first 5G integrated ML platform” in a document that was included with the announcement.

Bhatnagar highlighted Jio Brain’s transformative potential, saying it will help develop new 5G services, transform businesses, and optimize networks. Furthermore, it will pave the way for the development of 6G, where machine learning will play a major role. With this unveiling, Jio is demonstrating its dedication to driving the enterprise application of AI and ML.

Jio Brain

The platform is essentially an AI and ML-powered system that provides enterprises with automation and possibly even generative AI features, helping them run their operations more quickly and effectively. The service describes itself as “industry agnostic” and states that it provides many features. Its noteworthy offerings include LLM as a Service; sophisticated AI features for text, images, videos, documents, and voice; a cloud-native solution with plug-and-play architecture; data integration capabilities; and numerous AI/ML embedded mobile and web applications.

Jio Brain’s AI and ML services

Businesses can use Jio Brain to handle tasks related to natural language processing (NLP) and artificial intelligence (AI). These include text-to-music, text-to-image and video, speech-to-speech, speech-to-text, and image-to-video generation. Jio Brain can handle a diverse range of applications, and its extensive feature set makes it an effective tool for businesses looking to improve productivity and creativity throughout their operations. The platform makes code generation, debugging, explanation, and optimization easier. Jio Brain also includes essential machine learning features like feature engineering, ML chaining, hyperparameter tuning, and more, which can be used as stand-alone services or in combination.

Crucially, Jio Platforms stated that it would be willing to work with researchers in AI and ML to make improvements. This dedication to collaborative innovation demonstrates its understanding of the dynamic nature of AI and of the need to constantly push boundaries. By establishing partnerships with researchers, Jio Brain seeks to remain at the forefront of technological developments in the AI and ML space. Although the announcement offers an overview of Jio Brain’s capabilities, the company has not provided a launch date for the platform. That leaves businesses and industry insiders to speculate as they await Jio Brain’s official launch and the effects it may have on the market for business AI and ML solutions.

Reliance’s Recent Move Toward AI

Reliance recently revealed a collaboration to develop a large language model suited to India’s multifaceted linguistic environment. This initiative, in partnership with NVIDIA, aims to advance AI technologies for a range of applications, including drug discovery, chatbots, and climate research. In this project, NVIDIA will provide AI supercomputer technologies to Reliance Jio, the Reliance Group’s telecom subsidiary. These include networking solutions, graphics processing units (GPUs), CPUs, AI operating systems, and frameworks that are necessary for creating sophisticated AI models.

Reliance’s Latest Endeavors

Jio’s chair, Akash Ambani, recently revealed that the company is collaborating with IIT Bombay on the BharatGPT project. This follows the news that Reliance and NVIDIA are collaborating to use GH200 GPUs in India to create AI models. In addition to these initiatives, Jio is developing its own operating system (OS) for televisions. By improving user interaction and engagement on Jio devices, this operating system seeks to bolster the company’s services throughout its ecosystem.

In conclusion, the introduction of Jio Brain by Jio Platforms marks a major advancement in the application of ML and AI technologies in business settings. With its emphasis on 5G integration, customization, and cooperation with researchers, Jio Brain could change how businesses approach efficiency, innovation, and automation. The excitement surrounding Jio Brain is growing as the tech community waits for more information and a confirmed launch date. This is a significant development in the changing landscape of AI and ML platforms for businesses.

Google Cloud Introduces New Generative AI Tools for Retailers

On the eve of the National Retail Federation’s annual convention, Google unveiled several new generative AI tools intended to improve retailers’ online shopping experiences and other retail operations. The tools are designed to make chatbots and AI easier to implement, enhance search, and produce more customized shopping experiences. One of the new products is an AI-powered chatbot that merchants can incorporate into their mobile apps or websites.

Google Cloud’s products are the most recent illustration of generative AI’s expanding impact in the retail sector. With these generative AI-powered technologies, retailers can modernize operations, personalize online shopping, and change in-store technology rollouts. The virtual agents can converse with customers and recommend products based on their preferences.

Google Cloud unveils new generative AI tools for retailers

Google’s recently released AI products also include tools for improving retailers’ customer support systems and streamlining their product cataloging procedures. Beyond e-commerce, physical stores are receiving new AI capabilities via Google Distributed Cloud Edge, an already available hardware and software package.

All these areas seem to have a great deal of room for improvement. Only about one-third of customers who have used virtual assistants are happy with them, and almost 20% say they wouldn’t use them again. Customers are still very interested in using AI, though. Eighty percent of those who haven’t used the technology for shopping would like to give it a try, and the majority, or 59%, would like to use AI applications while they shop.

New generative AI tools

Google’s offerings are aimed squarely at these issues. The new tools include:

Conversational Commerce

Similar to a brand-specific ChatGPT, Conversational Commerce makes it easier to integrate chatbots into websites and mobile apps. These virtual agents converse with customers in plain language, can hold “helpful and nuanced” conversations about products, and make customized recommendations based on each person’s preferences.

Catalog and Content Enrichment toolset

Complementing the Conversational Commerce solution, Google Cloud’s new Catalog and Content Enrichment toolset uses gen AI models, including PaLM and Imagen, to automatically generate product descriptions, metadata, categorization suggestions, and more from as little as a single product photo. Retailers can also use the toolset to create new product images from existing ones, or use product descriptions as the basis for AI-generated product images.

For example, when a customer is looking for a formal dress for a wedding, a virtual agent can talk to them and offer customized product options based on their preferences for colors, the type of venue, the weather, complementary accessories, and price range. Importantly, retailers can deploy these advanced conversational AI agents in a matter of weeks rather than months. This new solution can be integrated into a retailer’s current catalog management software or run on the Vertex AI platform on Google Cloud.

Vertex AI

Additionally, Vertex AI Search for retail, a product from Google Cloud, provides retailers with natively embedded Google-quality search, browse, and recommendation capabilities based on their unique product catalog and shopper search patterns. With the addition of new large language model (LLM) capabilities, Vertex AI Search enables sellers to tailor an LLM to their specific catalog and the search habits of their customers. By better ranking possible products as a fit for any given search term, it can give users more relevant search results.

Customer Service Modernization

Customer Service Modernization combines chatbots with a retailer’s existing CRM data. It enhances self-service, recommends products, sets up appointments, monitors order status, and more.

Google Distributed Cloud Edge

To lower IT expenses and resource commitments related to retail GenAI, Google unveiled the Google Distributed Cloud Edge. It is a managed self-contained hardware kit designed specifically for retailers. It is intended to facilitate retailers’ use of AI in places with spotty or nonexistent internet. Store analytics, frictionless checkout, and streamlined mission-critical store operations are currently its main use cases. The edge cluster, which powers customers’ GenAI apps, is said by Google to be compatible with a variety of retail spaces, including convenience stores, gas stations, fast-casual restaurants, and grocery stores. It comes in a range of sizes, from single-server to multi-server configurations.

Here’s what they said

Carrie Tharp, vice president of Strategic Industries, Google Cloud, said:

“In only a year, generative AI has morphed from a barely recognized concept to one of the fastest-moving capabilities in all of technology and a critical part of many retailers’ agendas. With the ability to accelerate growth, boost efficiency, fuel innovation, and reduce toil, generative AI solutions are ready to be deployed now, and Google Cloud’s recent innovations can help retailers recognize value in 2024.”

Yahoo Spins Out its Enterprise Engine Vespa for AI-Scaling Excellence

Yahoo announced the spin-out of Vespa, its enterprise AI-scaling engine, to increase the reach of its technology platform. Vespa, which makes extensive use of data and AI online, will be a distinct and independent company. Since 2017, Vespa, a division of Yahoo, has served the AI requirements of a range of external clients, including Spotify, Wix, and major financial institutions. Examples include searching through millions of documents inside a large enterprise, providing superior data-driven online services, and enabling scalable AI-based language apps. Within Yahoo, Vespa technology supports 150 applications that are crucial to the business’s operations. These applications are necessary for real-time content personalization across all of Yahoo’s pages and for managing targeted advertising inside one of the world’s biggest ad exchanges.

What is Vespa?

In 1997, Vespa debuted as a cutting-edge search engine. Later, as a result of the Overture acquisition in 2003, it joined Yahoo. Vespa went open-source in 2017 and started providing services to outside customers in 2021. Through the spin-out, Yahoo will have a share in the new company and a director seat on its board.

Vespa – Yahoo’s spin-out

The spin-out intends to make Vespa’s technology platform more widely usable at a time when retrieval-augmented generation (RAG) is driving demand for the kind of AI processing the company provides. With around 150 applications supporting close to one billion users, Yahoo will continue to be Vespa’s top customer and a strategic investor. These applications process 800,000 queries per second. Vespa will also keep powering Yahoo’s internal solutions for search, dynamic recommendations, and targeted ads and content.

Benefits of entity spin-out

Building a company around Vespa will let it benefit many more organizations. It will enable businesses that already rely on Vespa to take advantage of cloud service efficiencies, and more businesses will be able to use it to solve online AI and big data problems. Vespa will also be able to speed up the creation of new features, enabling its users to produce even better solutions more quickly and inexpensively. Users can either deploy on a cloud service or stay with the open-source distribution.

Thanks to its decade-long work on fusing AI and big data online, Vespa delivers features and scale that exceed any comparable solution. As the world begins to use modern AI to solve actual business problems online, the demand for a platform that offers a solid basis for these solutions has never been greater.

Here’s what they said

Jim Lanzone, CEO of Yahoo, said:

“Vespa has been a critical component to Yahoo’s AI and machine-learning capabilities across all of our properties for many years. While remaining Vespa’s biggest customer and a key investor, we’ll continue to leverage all that Vespa has to offer while simultaneously creating a new business opportunity that allows other companies to harness its technology as an independent entity.”

Jon Bratseth, CEO of Vespa, added:

“Given Yahoo’s incubation and advancement of the technology over the years, now is the time to spin out Vespa and allow other companies to take advantage of Vespa Cloud in a meaningful way. With Yahoo’s continued investment and support, Vespa will be able to maintain its position as one of the largest and most sophisticated machine learning and database management platforms globally.”

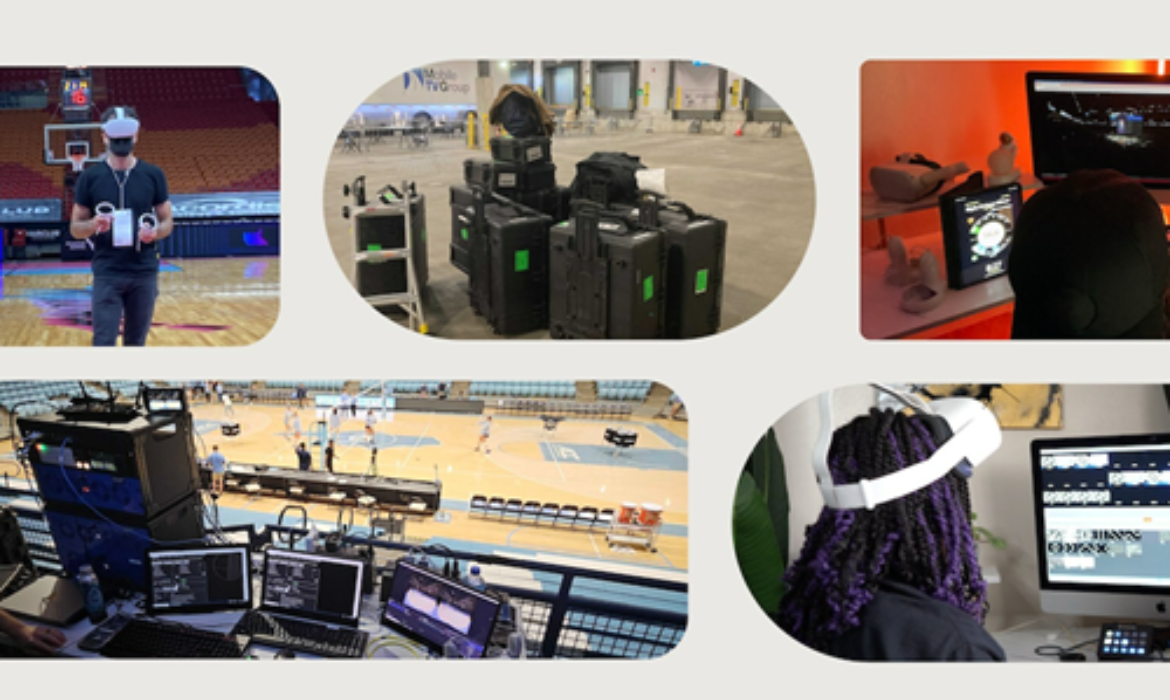

Media.Monks Unveils AI Integration for Customized Content

Media.Monks, the solely digital operating brand of S4 Capital, is introducing an AI product that integrates generative AI and machine learning into its software-powered production system. It will also support the delivery of highly customized content to customers in new media types. At the 2023 International Broadcasting Convention (IBC), NVIDIA, Adobe, and Amazon Web Services will present additional information on Media.Monks. The company uses strong software platforms, powerful GPUs, and cutting-edge networking technology to select highlights from live transmissions and deliver customized content to specific online audiences. Thanks to the partnership with NVIDIA, brands and customers are expected to receive more personalized experiences.

AI Integration in Software-powered production system

Media.Monks’ software-driven production system is built to function remotely with a geographically dispersed staff. The approach offers versatility by doing away with the need for single-use broadcast appliances, and it is offered as a full-service consulting, production, and integration service. The solution can be deployed locally to capture live content on the spot and send it to remote teams, or run in the cloud on Amazon Web Services in a conventional or edge ecosystem. It can easily adjust to the needs of delivering multi-format content across a range of new media formats, devices, and platforms. This method of live broadcasting also reduces greenhouse gas emissions significantly.

- A geographically dispersed workforce and cloud redundancy further improve reliability. The system also eliminates the need for single-use broadcast appliances and cuts costs by an estimated 50% or more compared with standard broadcast set-ups. The live broadcast production workflow, which won the Excellence in Sustainability Award, considerably cuts greenhouse gas emissions.

- Software-defined production, which is now available as a production, consulting, and integration service, lowers costs by an estimated 50% or more relative to conventional broadcast set-ups while increasing reliability through cloud redundancy and dispersed global staffing.

- The system can be installed in the cloud on Amazon Web Services (AWS) in a conventional or edge ecosystem, or it can be deployed locally so that a small, fast-moving team can capture content on site for distribution to remote teams.

Read More: LightBoxTV Extends Range with Total TV Solution for CTV Campaigns

Dedication to sustainability

Additionally, Media.Monks highlights its dedication to sustainability by pitching its remote service as a substitute for conventional broadcasting infrastructure. The concept, however, will need significant investment in transportation and physical infrastructure. At the 2023 NAB Show, the company received an award for excellence in sustainability for reducing greenhouse gas emissions related to live production and broadcast operations.

Finally, Media.Monks’ incorporation of generative AI into its software-powered production system illustrates its commitment to providing clients with customized content while lowering costs and reducing environmental impact. By adopting the latest technologies, Media.Monks seeks to stay at the forefront of the changing broadcast industry and offer a tailored and engaging experience for both advertisers and customers.

Here’s what they said

Lewis Smithingham, SVP of Innovation at Media.Monks, said

Our goal is to deliver a more personalised experience for consumers and brands as efficiently as possible. Fans are increasingly craving personalised content they can watch on non-linear channels, so we’re using the latest GPUs, networking technologies and software platforms from NVIDIA and AWS to build upon our next-generation broadcasting solution and deliver the content people most want to watch.

Bob Pette, Vice President and General Manager of Professional Visualisation at NVIDIA, added

The future of broadcast is AI-powered and software-defined. Our collaboration with Media.Monks will help deliver a more engaging and personalized experience for brands and consumers.

Read More: Criteo’s Commerce Max DSP Unites Retail Media with General Availability

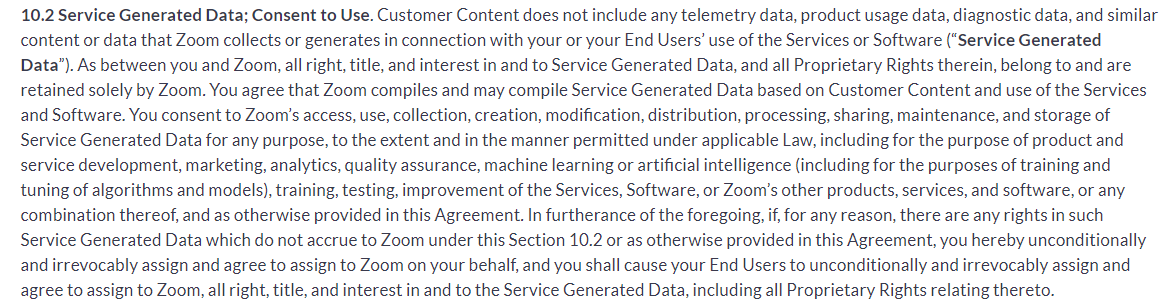

Zoom Faces Backlash As Revised AI Policy Raises Privacy Issues

Zoom, the video conferencing company that rose to prominence in 2020 during the Covid pandemic, is receiving harsh criticism for its revised terms and practices. Following two changes to its AI approach, the corporation has announced that it will develop AI-powered tools using client information without user approval. Under the amended AI policy terms, Zoom claims complete authority over data collected during Zoom calls. Furthermore, it can reuse and share this data in any way it wishes, within the limits of the law, to develop its artificial intelligence and machine learning frameworks. The terms give users no option to opt out.

As part of our commitment to transparency and user control, we are providing clarity on our approach to two essential aspects of our services: Zoom’s AI features and customer content sharing for product improvement purposes. Our goal is to enable Zoom account owners and…

— Zoom (@Zoom) August 7, 2023

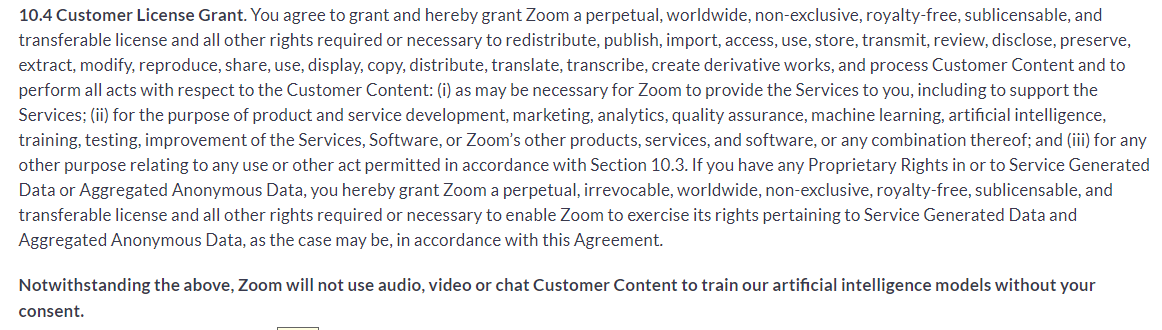

Updated TOS with AI policy

Zoom revised its terms of service (TOS) in July. The policy revisions come amid a public uproar over the ethical implications of developing AI models with consumer data. According to the TOS, Zoom may collect and use “service-generated data” such as product usage, telemetry, and diagnostic data to train AI models. Customers agree to allow Zoom to access, utilize, gather, create, change, distribute, process, manage, and keep service-generated data for any reason, including ML or AI training and algorithm tuning, without attribution. The TOS, however, does not explain or clarify what Zoom means by service-generated data.

Furthermore, according to the revised policies, Zoom has obtained a perpetual, worldwide license that allows it to freely issue, access, utilize, store, pass on, evaluate, disclose, protect, extract, change, replicate, share, exhibit, distribute, translate, record, create new versions of, and manage customer content. The license is non-exclusive and royalty-free, and it permits sublicensing and transfer.

Zoom Responds

Critics have raised concerns about transparency and compliance with privacy laws. Many worry that Zoom’s updated terms may have significant ramifications for the telehealth and education sectors, both of which are governed by privacy laws. They also believe it is critical to address the training of AI models on data gathered from recorded sessions.

Zoom, meanwhile, denies using user information for AI training without first obtaining authorization. According to a Zoom blog post, clause 10.2 is intended merely to increase transparency about how data is used to improve the customer experience. This would entail studying usage trends, such as peak hours in specific time zones, to optimize data center operations and thereby improve video quality. Although Zoom has stated that it will not use audio, video, or chat content for training its models in the healthcare and educational sectors without client authorization, its terms of service appear inconsistent with that statement.

Smita Hashim, Chief Product Officer for Zoom, writes,

An example of a machine learning service for which we need license and usage rights is our automated scanning of webinar invites/reminders to make sure that we aren’t unwittingly being used to spam or defraud participants. The customer owns the underlying webinar invite, and we are licensed to provide the service on top of that content. For AI, we do not use audio, video, or chat content for training our models without customer consent.

Read More: Battle of the Ads: Borzo Reveals Who Wins – Advertising Team or AI?

Zoom’s AI-powered assistant Zoom IQ contradicts TOS

A few months ago, Zoom introduced Zoom IQ, an AI-based assistant. The feature, which summarizes conversation threads and produces automated answers to chat queries, is described as optional. However, Zoom IQ is enabled by default, so users who do not change their settings give the company permission to collect their data.

When a Zoom meeting starts with Zoom IQ enabled, the other participants in the call receive a notice stating “Meeting Summary has been enabled.” The account holder can access the inputs and AI-generated content of all users. The company ties this to model training and product improvement. Participants have two options: accept and join the meeting, or leave it. Those who stay cannot avoid sharing private information. In other words, someone else can give Zoom permission, on your behalf, to use your data to build AI.

A look at the future

Zoom, whose popularity surged during the Covid-19 outbreak, has faced criticism for its privacy practices before. The year before, the business was hit with an $85 million lawsuit over a security flaw that let hackers access unidentified virtual meeting rooms. Given the constantly changing AI landscape, adjusting its policies to remain relevant is unavoidable. Zoom is not alone: other AI platforms, including OpenAI’s ChatGPT and Google’s Bard chatbot, have raised similar concerns. The consequences of Zoom’s recent changes to its terms and policies remain uncertain. Will they further damage the company’s reputation, or will they turn out well?

Read More: FTC Issues Notice to OpenAI over ChatGPT’s Privacy Data Breach

A Look Ahead: Convergence Of Linear TV And Digital TV Advertising

Digital and Linear TV advertising – two culturally and technologically distinct ecosystems – are merging to increase the effectiveness of marketing to consumers.

The shift from traditional linear television to connected television (CTV) and video-on-demand options has made it difficult for advertisers to reach mass audiences. Intriguingly, some of the same techniques used for converting television viewers to digital audiences can be applied to converting addressable digital inventory into non-addressable inventory.

Interesting Read: Connected TV Explained: The Essential Glossary Of CTV

Machine Learning and Data Automation

Advertising technology (adtech) companies were among the first to adopt big data, machine learning, and artificial intelligence. One reason is that websites and apps generate enormous amounts of data that advertisers can use to achieve their quantifiable goals. Automation is the key to leveraging this data in performance advertising, and it is the most economically logical step for marketers. As a result, ad tech companies both small and large have successfully embraced machine learning at scale to attract customers and increase ROI.

TV advertising, on the other hand, continues to use conventional methods and relies on billboard data to understand advertising reach. Although the data is modeled, it is not as responsive as the techniques used in digital advertising.

Because machine learning is not well suited to small and medium-sized data sets, advertisers are moving to other innovative methods for TV ads. Data-Driven Linear Purchasing (DDL) is gaining momentum because it combines the precision of digital with the reach of TV. It leverages automation and data to target audiences on national linear TV. The method may not be on par with machine learning, but optimizing DDL campaigns still requires accurate prediction and efficient, complex procedures.

Interesting Read: AdTech Vs MarTech: Let’s Settle This Once For All!

Performance Measurement

Performance measurement is similar between the two media. In contrast to digital advertising, where conversion events are used to train sophisticated supervised learning models, TV advertising is more difficult to measure. Nevertheless, savvy TV buyers have various other ways to improve a buy, such as web and app analytics and group surveys.

While TV advertising is embracing the value of data, digital advertising has been heavily criticized for using excessive amounts of it. As a result, government and industry privacy regulations have eliminated many of the drivers of machine learning techniques, including the individual-level identifiers used during transactional events and advertising exposures.

The Road Ahead For Advertising

What is in store for advertisers after the privacy regulations?

Advertising technology (AdTech) companies can develop technology that learns from data samples, analyzes that data in context, and applies the findings to empower buyers and sellers.

An enormous amount of digital data remains available despite changes in privacy policies. Advertisers should concentrate on contextual targeting, so machine learning is likely to be part of any solution. In television advertising, however, data scientists will have to prudently balance the statistical outputs of the models. Identifying and targeting audiences will remain a challenge for the advertising industry. In the meantime, data scientists who meld the best technologies from digital and television advertising can deploy powerful and accurate targeting in a more privacy-conscious world.

Interesting Read: The Ultimate A-Z Glossary Of Digital Advertising!