Published on: April 29, 2024

Microsoft has begun releasing a new family of Small Language Models (SLMs) as part of its strategy to make lightweight yet high-performing generative AI technology available on more platforms, including mobile devices. The first model in the family is Phi-3-mini, and Microsoft plans to follow it with Phi-3-small and Phi-3-medium over the next few weeks. Small language models are said to be significantly more affordable for users and are trained on much smaller datasets than LLMs such as Google’s Gemini and the models behind OpenAI’s ChatGPT.

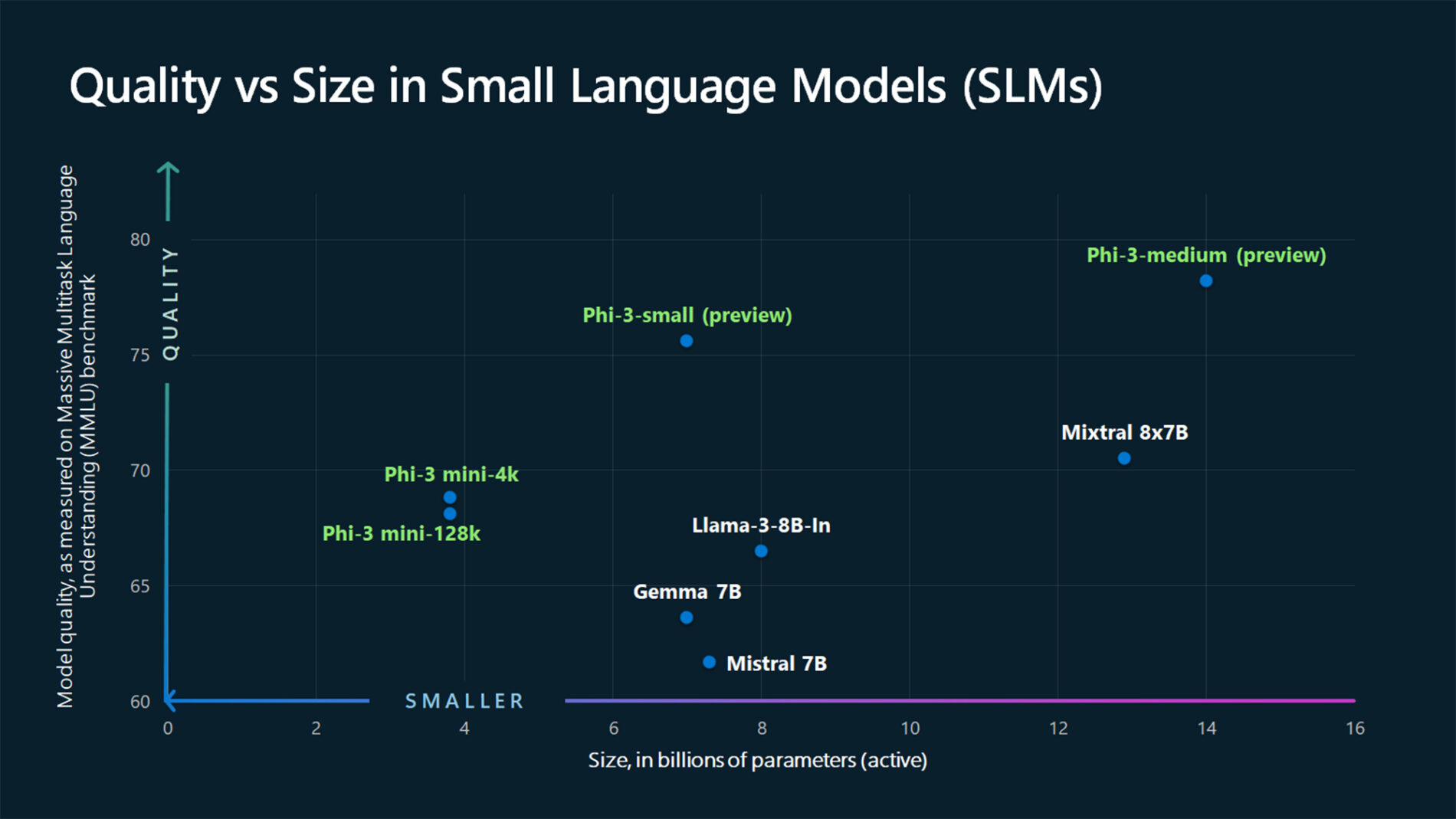

For starters, this language model’s small size might allow it to operate locally, like an app on a smartphone. ChatGPT, on the other hand, is much larger and requires an internet connection to operate. Phi-3-mini was trained on a comparatively small dataset of 3.3 trillion tokens (tokens are the numerical representations of text that a model processes). It is the first of the three compact models that Microsoft has announced. Microsoft says it outperforms models of the same size and the next size up on a number of benchmarks covering math, language, reasoning, and coding.

Like the better-known Large Language Models (LLMs), Small Language Models (SLMs) are a class of artificial intelligence models. Both are trained on very large amounts of data, but SLMs have far fewer parameters. Because of their smaller size, SLMs can run offline on a device instead of relying on a cloud connection as LLMs typically do, and so they tend to respond more quickly. On the other hand, SLMs are better suited to simple tasks, while LLMs have more sophisticated capabilities. SLMs are also easier to adapt to particular requirements and well suited to devices with less processing power. They are also far more economical than LLMs, according to Microsoft.

SLMs have benefits, but as Microsoft noted in its technical report, they also have disadvantages. The researchers pointed out that “challenges around factual inaccuracies (or hallucinations), reproduction or amplification of biases, inappropriate content generation, and safety issues” still plague Phi-3, just like they do most language models.

With the release of its most recent model, Microsoft gives developers a wider range of capable language models to choose from when building generative AI applications. The 3.8B-parameter Phi-3-mini is available on Hugging Face, Ollama, Microsoft Azure AI Studio, and other AI development platforms.
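The 3.8B parameter count also gives a rough sense of why a model like this can plausibly run on a phone or laptop. The sketch below is only back-of-the-envelope arithmetic: the precision levels listed are illustrative assumptions, not formats the article says Microsoft ships, and the estimate covers the weights alone, ignoring activations and runtime overhead.

```python
# Back-of-the-envelope estimate of the memory needed just to hold the weights
# of a 3.8B-parameter model. Assumptions: the precisions below are illustrative,
# and activations, caches, and runtime overhead are ignored.

PARAMS = 3.8e9  # parameter count reported for Phi-3-mini

# Hypothetical storage precisions, in bits per weight.
BITS_PER_WEIGHT = {"fp32": 32, "fp16": 16, "int8": 8, "int4": 4}


def weight_memory_gb(params: float, bits: int) -> float:
    """Return the weight storage size in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        print(f"{name}: ~{weight_memory_gb(PARAMS, bits):.1f} GB")
```

Under these assumptions the weights need roughly 7.6 GB at 16 bits per weight and about 1.9 GB at 4 bits, which is the difference between a workstation-class footprint and one that fits in a modern smartphone's memory.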

The context window, measured in tokens, is the amount of text a model can read and write at any given moment. Microsoft says Phi-3-mini comes in two variants: one with a 4K-token context length and one with a 128K-token context length. Longer context windows let models take in and reason over larger bodies of text, including documents, web pages, and code.
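As a rough illustration of what the two context lengths mean in practice, the sketch below picks the smallest variant that can hold a prompt plus room for a reply. Everything here is an assumption for illustration: "4K" and "128K" are taken to mean 4,096 and 131,072 tokens, the four-characters-per-token ratio is a crude heuristic for English text (a real tokenizer would give exact counts), and `pick_variant` and `reserved_for_output` are hypothetical names.

```python
# Rough sketch: choose the smallest context-length variant that fits a prompt.
# Assumptions (not from the article): "4K" = 4,096 and "128K" = 131,072 tokens,
# and about 4 characters per token as a crude English-text heuristic.

CONTEXT_VARIANTS = {"4K": 4_096, "128K": 131_072}
CHARS_PER_TOKEN = 4  # heuristic only; a real tokenizer gives exact counts


def estimate_tokens(text: str) -> int:
    """Crude token estimate from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def pick_variant(prompt: str, reserved_for_output: int = 512):
    """Return the smallest variant whose window holds prompt + reply, else None."""
    needed = estimate_tokens(prompt) + reserved_for_output
    # Try variants from the smallest window to the largest.
    for name, limit in sorted(CONTEXT_VARIANTS.items(), key=lambda kv: kv[1]):
        if needed <= limit:
            return name
    return None


if __name__ == "__main__":
    print(pick_variant("Summarize this short note."))  # fits the smaller window
    print(pick_variant("x" * 40_000))                  # needs the larger window
```

A short question fits comfortably in the smaller window, while a long report or a large code file only fits in the larger one. Input beyond both limits would have to be split or summarized first.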

Microsoft says Phi-3-mini is the first model in its class to support a context window of up to 128K tokens with little impact on quality. The model is also instruction-tuned, meaning it has been trained to follow different types of user instructions, which Microsoft says makes it “ready to use out-of-the-box.”

Microsoft claims that more people will be able to use AI in ways that weren’t possible with LLMs because models like Phi-3 can operate offline. Microsoft gave the example of a farmer examining crops and noticing signs of disease on a leaf or branch. Using an SLM with visual capabilities, the farmer could snap a photo of the affected crop and receive treatment recommendations for pests or diseases within seconds.

Phi-3-mini is an SLM. Put simply, SLMs are streamlined versions of larger language models. Smaller AI models are cheaper to build and run than LLMs, and they work better on smaller devices such as laptops and smartphones.

SLMs stand out for their specialization, whereas LLMs are trained on vast amounts of generic data. Through fine-tuning, SLMs can be tailored to particular tasks and made to perform them accurately and efficiently. Because of this targeted training, most SLMs require significantly less computing power and energy than LLMs. SLMs also differ in latency and inference speed: their small size shortens processing times. Their low cost makes them attractive to smaller organizations and research groups.

Microsoft has said, and most reports indicate, that these SLMs will be significantly less expensive than LLMs, but precise pricing information is difficult to come by. Even so, taking the promise at face value, it is possible to envision genAI becoming more accessible to sole proprietors and very small businesses. Use cases like creating email subject lines, writing marketing newsletters, or drafting social media posts may not need the enormous power of an LLM, but it remains to be seen what these models can do in real-world scenarios.